Semantic Search Examples: 7 Insights into AI Search Trends

Unlocking Meaning: The Power of Semantic Search

Stop relying on simple keywords. This listicle provides seven powerful semantic search examples to transform how you discover information. Learn how AI-powered tools understand the meaning behind your queries—not just the words themselves—to deliver superior results. Whether you're a marketer, researcher, or entrepreneur, understanding these concepts is crucial for staying ahead. Explore examples like Google's BERT, OpenAI embeddings, and vector databases like Pinecone and Weaviate, and see how semantic search can revolutionize your workflow. Dive in and discover the future of search.

1. Google's BERT-Powered Search

In the ever-evolving landscape of search engine optimization (SEO) and online content creation, understanding the intricacies of how search engines interpret queries is paramount. One of the most significant advancements in search technology is Google's implementation of BERT (Bidirectional Encoder Representations from Transformers), a revolutionary model that has fundamentally changed how we interact with search. BERT-powered search represents a giant leap forward for semantic search examples, moving beyond simple keyword matching and diving into the nuanced world of natural language processing. This means Google now understands the meaning behind your search, not just the individual words.

Before BERT, search engines primarily relied on keyword matching. If you searched for "red shoes for women," Google would look for pages containing those specific words. However, this often led to irrelevant results, especially for complex or conversational queries. Imagine searching "can you get medicine for someone pharmacy." A keyword-based search might struggle with the implied meaning. BERT, however, understands the context and intent, recognizing that you're asking about picking up prescriptions for someone else. This ability to decipher the true meaning of a query is the core strength of BERT.

BERT's magic lies in its bidirectional approach. Unlike previous models that processed text linearly (either left-to-right or right-to-left), BERT analyzes words in relation to all other words in the sentence, both before and after. This bidirectional context understanding is crucial for deciphering the nuances of language, especially the roles of prepositions like "to" and "from." For example, a search for "flights to London from Paris" is accurately interpreted by BERT, whereas older models might have confused the origin and destination.

This sophisticated natural language processing allows BERT to handle complex, conversational queries effectively. Searching for something like "how to catch a fish without a rod" now yields practical and relevant results. BERT understands the intent—catching a fish—and the constraint—without a rod—providing answers that truly address the user's need. This is a game-changer for content creators, as it emphasizes the importance of creating comprehensive, contextually rich content that answers real-world questions.

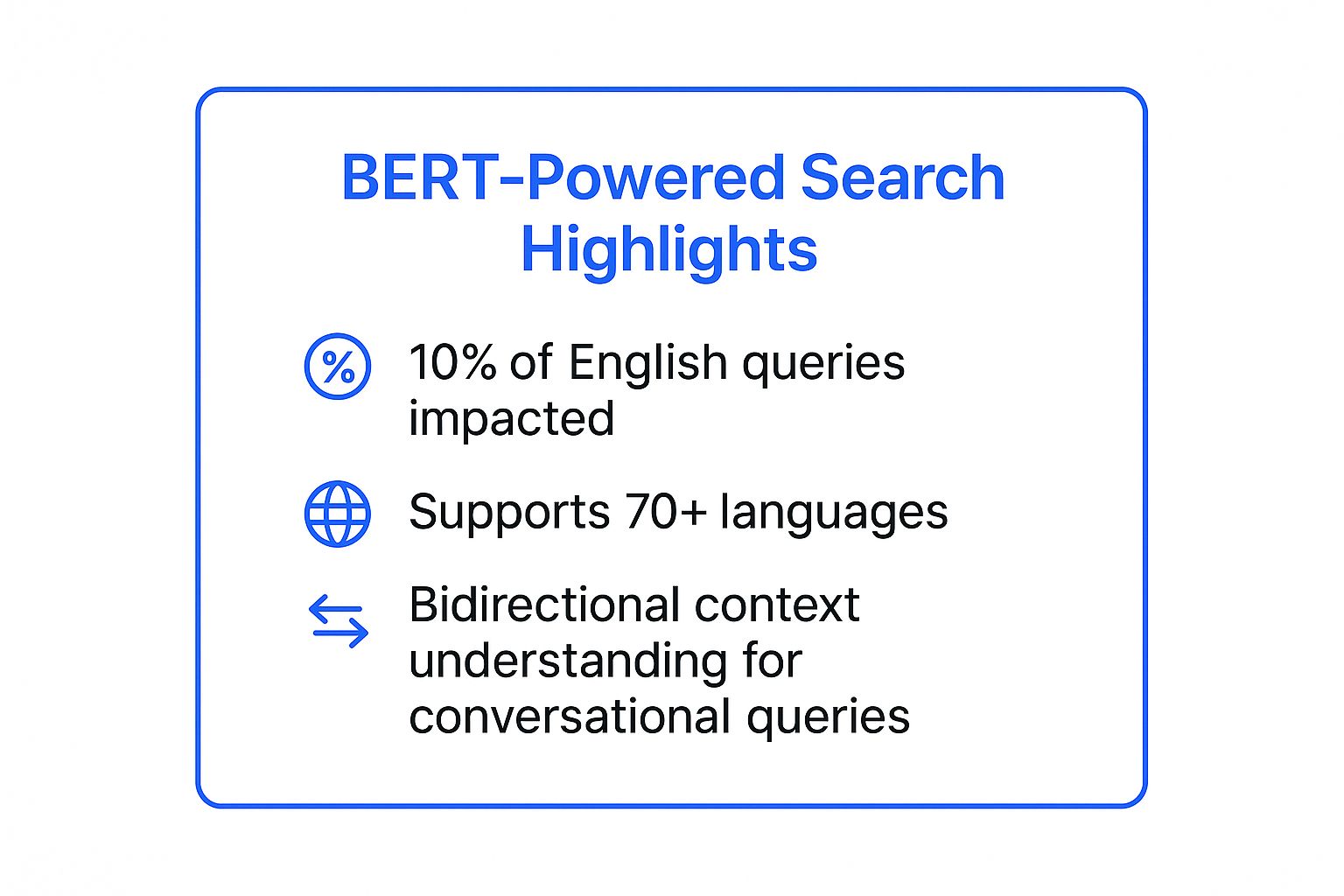

The following infographic summarizes some key data points highlighting the impact and reach of BERT-powered search.

As the infographic highlights, BERT's impact is widespread, affecting a significant portion of English queries and supporting over 70 languages. This widespread adoption underlines the importance of optimizing content for semantic search.

While BERT offers significant advantages, it also has some limitations. Its sophisticated algorithms require considerable computational resources. Also, the results can be less predictable than traditional keyword-based searches, making it slightly more challenging for SEOs to guarantee top rankings. Finally, BERT may struggle with very new or niche terminology that hasn't yet been widely used online.

BERT-Powered Search Highlights – Key Takeaways:

- Impact: BERT has significantly impacted a considerable percentage of English queries, demonstrating its widespread influence on search results.

- Multilingual Support: With support for over 70 languages, BERT's ability to understand context transcends linguistic barriers.

- Conversational Queries: The bidirectional context understanding is particularly effective for deciphering the nuances of conversational search queries.

These key takeaways underscore the need for content creators to adapt their strategies. To leverage the power of BERT, focus on natural language content creation. Optimize for question-based queries and use structured data markup to enhance semantic understanding. By creating content that answers real-world questions in a clear and comprehensive way, you can significantly improve your visibility in the age of semantic search.

This shift towards semantic search signifies that Google is prioritizing user intent over strict keyword matching. Therefore, understanding and adapting to BERT is essential for anyone involved in digital marketing, content creation, or online research. By embracing a semantic-focused approach, you can ensure your content remains discoverable and relevant in the evolving search landscape.

2. Elasticsearch with Vector Search

Unlocking the true potential of search lies in understanding the meaning behind the words. Traditional keyword-based search often falls short, failing to capture the nuances of human language. This is where the power of semantic search comes into play, and Elasticsearch with vector search stands as a leading solution in this domain. This approach elevates search from simple keyword matching to a sophisticated understanding of context and intent, delivering significantly more relevant and insightful results.

Elasticsearch, renowned for its robust and scalable search capabilities, integrates seamlessly with vector search technology to provide a powerful platform for semantic search examples. This combination allows you to move beyond simple keyword matching and delve into the semantic meaning of your data. How does it work? At its core, Elasticsearch with vector search utilizes dense vector representations of text, generated by machine learning models. These vectors capture the semantic essence of words and phrases, allowing the search engine to identify similar content even when the exact keywords aren't present. Imagine searching for "healthy recipes for dinner" and getting results for "nutritious evening meals" – this is the power of semantic understanding.

Elasticsearch’s vector search capabilities are built upon a foundation of key features designed for optimal performance and flexibility. Dense vector storage and search are at the heart of the system, enabling efficient similarity calculations. The platform's hybrid search functionality allows you to combine traditional keyword search with the power of semantic search, providing comprehensive results. Real-time indexing and search ensure your data is always up-to-date, while the scalable distributed architecture allows Elasticsearch to handle massive datasets with ease. Finally, the seamless integration with machine learning models makes it easy to incorporate custom embeddings for even greater precision.

The benefits of this approach are compelling, making it a strong contender in the realm of semantic search. The highly scalable and performant nature of Elasticsearch allows it to handle large-scale deployments with speed and efficiency. The flexible hybrid search approach enables you to tailor your search strategy to your specific needs. A strong ecosystem and community support provide valuable resources and assistance, while real-time search capabilities ensure your users always have access to the most current information.

Several industry giants have successfully implemented Elasticsearch with vector search to enhance their search functionalities. Netflix, for example, utilizes Elasticsearch for content recommendation and search, delivering personalized viewing experiences to millions of users. Uber leverages its power for location-based semantic search, improving the accuracy and relevance of ride requests. GitHub employs Elasticsearch for code search with semantic understanding, enabling developers to find relevant code snippets even when the exact keywords don't match. These real-world examples highlight the versatility and effectiveness of Elasticsearch in diverse applications.

While the advantages are significant, there are some drawbacks to consider. Setting up and configuring Elasticsearch with vector search can be complex, requiring expertise in vector embeddings. Resource requirements can be substantial for large-scale deployments, demanding careful planning and resource allocation. However, the benefits often outweigh these challenges, particularly for organizations seeking a robust and scalable semantic search solution.

To maximize the effectiveness of your implementation, consider these actionable tips: Start with pre-trained embeddings before investing in custom models. Combine semantic and keyword search for the most comprehensive results. Optimize vector dimensions based on your specific use case. Utilize approximate nearest neighbor search for enhanced performance. By following these best practices, you can unlock the full potential of Elasticsearch with vector search.

Elasticsearch with vector search deserves its place in this list due to its powerful combination of scalability, performance, and semantic understanding. Whether you're a digital marketer seeking to improve content discovery, a researcher navigating complex datasets, or a small business owner striving to enhance customer experience, Elasticsearch with vector search provides a robust and versatile solution for unlocking the true potential of your data. It’s a game-changer for anyone seeking to move beyond keyword matching and embrace the power of semantic understanding. While it requires some technical expertise, the rewards in terms of search relevance and user experience are well worth the investment. If you are looking for a powerful and scalable semantic search solution, Elasticsearch with vector search should be at the top of your list.

3. OpenAI's Embedding-Based Search

Unlocking the true potential of semantic search, OpenAI's embedding-based search offers a powerful approach to understanding and retrieving information. This method leverages advanced language models to transform text into high-dimensional vector representations, also known as embeddings. These embeddings capture the semantic meaning of the text, allowing for a much more nuanced and accurate search experience compared to traditional keyword-based methods. By comparing the vector representations of a search query and the available documents, the system can identify relevant results even if they don't share exact keywords, focusing instead on the underlying meaning and context. This opens up a world of possibilities for finding precisely what you're looking for, regardless of how it's phrased.

Here’s how it works: OpenAI's powerful language models analyze text input, dissecting its meaning and context. This analysis translates into a unique vector, a numerical representation capturing the essence of the text. When a user performs a search, their query is also transformed into a vector. The system then compares the query vector to the pre-calculated document vectors, using a similarity measure like cosine similarity. The documents with vectors closest to the query vector are deemed most relevant and returned as search results. This method allows for a deep understanding of relationships between words and concepts, going far beyond simple keyword matching.

The benefits of OpenAI's embedding-based search are numerous. Its state-of-the-art semantic understanding ensures highly accurate and relevant search results. The readily available API facilitates easy integration into existing systems, saving developers valuable time and resources. OpenAI's consistent performance and regular model updates provide a reliable and evolving platform for building semantic search applications. These high-quality text embeddings, with their 1536 dimensions, capture subtle nuances in language, making it a powerful tool for a wide range of applications across various text lengths and languages. Real-world examples of successful implementations include Notion's AI-powered search, enabling users to effortlessly find information across their workspace. Shopify uses it to power its product recommendation system, offering customers personalized shopping experiences. Even help desk systems are leveraging this technology to quickly surface the most relevant articles based on user questions, streamlining customer support.

While the advantages are compelling, it's important to consider the potential drawbacks. Using OpenAI's API comes with associated costs. The platform offers limited customization options, and users require an external vector database for storing embeddings. Latency can also be a concern for real-time applications. However, the powerful capabilities and ease of integration often outweigh these limitations.

For those looking to implement OpenAI’s embedding-based search, consider these practical tips: For large documents, break them down into smaller, manageable chunks before generating embeddings. This improves accuracy and reduces processing time. Caching embeddings can significantly reduce API costs by storing and reusing previously generated embeddings. Employ cosine similarity as a robust metric for comparing embeddings and identifying semantic relationships. Finally, combining embedding-based search with traditional metadata filtering can further refine results and enhance search precision.

So, when should you consider OpenAI's embedding-based search? This approach excels in situations where understanding the intent and context of a query is crucial. If you’re dealing with complex or ambiguous language, or if traditional keyword search falls short, OpenAI's embedding-based search offers a powerful alternative. Whether you're a digital marketer seeking to personalize content recommendations, a researcher delving into vast knowledge bases, or a small business owner looking to enhance your customer support, this technology provides a powerful toolkit for navigating the complexities of information retrieval. Its remarkable ability to understand the true meaning behind words empowers you to connect with your audience, uncover hidden insights, and deliver exceptional user experiences.

4. Pinecone Vector Database Search

Are you struggling to find the information you need amidst mountains of data? Traditional keyword search often falls short when dealing with the nuances of human language. Enter semantic search, a powerful technique that understands the meaning behind your queries. And if you're serious about implementing semantic search at scale, Pinecone vector database search deserves a prominent place in your toolkit. This cutting-edge technology allows you to leverage the power of vector embeddings to unlock deeper insights and deliver truly relevant search results. It’s a game-changer for anyone working with large datasets, from digital marketers analyzing customer feedback to researchers exploring complex scientific literature.

Pinecone (www.pinecone.io) is a fully managed vector database specifically engineered for the demands of semantic search. Instead of relying on exact keyword matches, it utilizes vector embeddings, mathematical representations of data that capture semantic relationships. This means that searching for "best Italian restaurants near me" will also return results for "top-rated trattorias in my area," even if the keywords don't perfectly overlap. This nuanced understanding of language is what sets semantic search, and Pinecone in particular, apart from traditional search methods.

So, how does it work? First, you transform your data – be it text, images, or audio – into vector embeddings using a model like Sentence-BERT or CLIP. These vectors are then stored within Pinecone's highly optimized database. When a user initiates a search, their query is also converted into a vector, and Pinecone rapidly compares it to the stored vectors, returning the most similar results based on various distance metrics like cosine similarity. This process happens in real-time, enabling lightning-fast semantic search even across massive datasets.

The benefits of using Pinecone for semantic search are compelling. As a fully managed service, it eliminates the complexities of infrastructure management, allowing you to focus on building your application, not managing servers. Its architecture is optimized for vector operations, ensuring blazing-fast query performance at scale. Further enhancing its utility is built-in metadata filtering, allowing you to combine vector search with traditional filtering techniques for highly refined results. Imagine searching for "red running shoes" and then filtering by price range or brand – Pinecone empowers you to do just that.

Several prominent companies are already leveraging Pinecone's power. Gong.io uses it to enhance conversation intelligence, enabling sales teams to quickly surface relevant insights from customer calls. Ramp utilizes Pinecone for financial document search, streamlining complex financial analysis. And Expedia employs this technology to power its travel recommendation systems, providing personalized travel suggestions based on user preferences. These examples demonstrate the versatility and scalability of Pinecone across various industries and use cases.

While Pinecone offers a robust and efficient solution, it's crucial to be aware of potential drawbacks. As with any managed service, there are inherent vendor lock-in concerns. Furthermore, costs can scale significantly as your data volume and query frequency increase. Finally, customization of the underlying algorithms is limited, which might be a constraint for certain highly specialized applications.

To maximize your success with Pinecone, consider these actionable tips:

- Design your metadata schema carefully: Well-structured metadata is essential for efficient filtering and retrieval. Consider the specific attributes you'll need to filter on and design your schema accordingly.

- Monitor query performance and optimize index settings: Pinecone offers various index settings that can impact query speed. Regularly monitor your performance and adjust these settings to ensure optimal efficiency.

- Use namespaces to organize different data types: Namespaces allow you to logically separate different datasets within Pinecone, improving organization and performance.

- Implement proper error handling for API calls: Robust error handling ensures the resilience of your application and provides a graceful user experience.

Pinecone is a compelling solution for implementing semantic search examples, particularly when dealing with large datasets and complex queries. Its managed service model, combined with its focus on speed and scalability, makes it a strong contender for anyone seeking to unlock the power of semantic search. By understanding its strengths and limitations, and by implementing the tips outlined above, you can leverage Pinecone to build powerful, intelligent search experiences that deliver real value to your users.

5. Microsoft Cognitive Search: Unleashing the Power of AI-Driven Search

In today's data-driven world, finding the information you need quickly and efficiently is crucial. Traditional keyword-based search often falls short, especially when dealing with large volumes of unstructured data. This is where semantic search steps in, understanding the intent behind a search query and the context within the content itself. Microsoft Cognitive Search (formerly Azure Search) stands out as a powerful example of semantic search in action, offering a compelling solution for individuals and businesses looking to unlock the true potential of their data. This makes it a critical tool for digital marketers, content creators, researchers, entrepreneurs, and students alike, anyone who needs to quickly and efficiently extract meaningful information from complex datasets.

Microsoft Cognitive Search goes beyond simple keyword matching. It integrates cutting-edge AI capabilities to understand the meaning and relationships within your data, providing more relevant and insightful search results. How does it achieve this? Through a sophisticated pipeline of cognitive skills, including natural language processing, optical character recognition, and image analysis. These skills allow Cognitive Search to extract key information from various data sources like documents, images, and even audio files, transforming unstructured data into structured, searchable information. This translates to finding precisely what you're looking for, even if the exact keywords aren't present in the document.

Imagine searching for information on "car accident claims." A traditional search engine might only return results containing those exact words. However, Microsoft Cognitive Search, leveraging its semantic understanding, could also surface documents related to "vehicle collision insurance," "auto incident reports," or even "road traffic accident compensation," significantly broadening the scope and relevance of the search results.

The power of Microsoft Cognitive Search is evident in its successful implementation across various industries. Progressive Insurance, a major player in the insurance sector, utilizes Cognitive Search to streamline claims processing. By enabling employees to quickly access relevant claims documents, they significantly reduce processing time and improve customer satisfaction. H&R Block, the renowned tax preparation service, employs Cognitive Search to efficiently process tax documents, simplifying a traditionally complex and time-consuming process. Even the NHS, the UK's National Health Service, leverages the platform to sift through vast amounts of medical literature, accelerating research and enhancing patient care. These examples highlight the versatility and effectiveness of Microsoft Cognitive Search in diverse and demanding environments.

So, when should you consider implementing Microsoft Cognitive Search? If you're dealing with large volumes of unstructured data, struggling with traditional keyword search limitations, or seeking to improve search relevance and efficiency, then Cognitive Search might be the perfect solution. It's particularly valuable for organizations needing to unlock insights from various data sources, including documents, images, and media files. The platform's integration with the broader Microsoft ecosystem offers further benefits, particularly for organizations already leveraging Azure services.

While Microsoft Cognitive Search presents compelling advantages, it’s crucial to be aware of its limitations. Its close ties to the Microsoft Azure ecosystem can be a constraint for organizations preferring other cloud providers. Additionally, the platform’s comprehensive features can be overwhelming for simpler use cases, potentially adding unnecessary complexity. Finally, the pricing structure, while scalable, can become substantial for large-scale operations.

To maximize the benefits of Microsoft Cognitive Search, consider these actionable tips: First, leverage the built-in cognitive skills before investing in custom development. These pre-built skills can handle a wide range of tasks, saving you time and resources. Second, configure semantic settings to enhance query understanding and ensure more relevant results. Third, implement robust indexing strategies for large datasets to optimize search performance. Finally, take advantage of the integrated security features to protect your valuable data.

Microsoft Cognitive Search represents a significant step forward in the evolution of search technology. By combining traditional search capabilities with advanced AI-powered content understanding, it offers a powerful and efficient way to access and utilize information. Whether you are a digital marketer seeking to understand customer behavior, a researcher exploring complex datasets, or a business owner looking to improve operational efficiency, Microsoft Cognitive Search provides the tools you need to unlock the full potential of your data. You can explore Microsoft Cognitive Search further and learn about its features and pricing on the Microsoft Azure website. By embracing semantic search, you can transform the way you interact with information, making better decisions and driving innovation.

6. Amazon Kendra Intelligent Search

In today's data-driven world, finding the right information quickly and efficiently is paramount. Traditional keyword-based search often falls short, returning irrelevant results and frustrating users. This is where semantic search comes into play, and Amazon Kendra stands out as a powerful example of its implementation. Amazon Kendra is a highly intelligent enterprise search service powered by machine learning, offering a significant upgrade over conventional search methods. It deserves its place on this list due to its robust capabilities, ease of integration within the AWS ecosystem, and its proven track record of success in diverse industries. If you're looking for a robust, scalable, and intelligent search solution, especially within an AWS environment, Amazon Kendra is worth serious consideration.

(What is it and how does it work?)

Amazon Kendra moves beyond simple keyword matching. It delves into the meaning and context of user queries, leveraging natural language understanding (NLU) to decipher the intent behind the search. Instead of just looking for matching words, Kendra understands synonyms, related concepts, and even the nuances of phrasing. This allows it to surface truly relevant results, even if the user's query doesn't perfectly match the keywords within the documents. For example, a search for "employee onboarding process" could also return results related to "new hire orientation" or "induction program."

Kendra's intelligent answer extraction further enhances the search experience. It can pinpoint the precise answer to a user’s question within a document, rather than simply presenting the entire document. This saves users valuable time and effort, especially when dealing with lengthy reports or complex technical documentation. Imagine asking "What is the company's vacation policy?" and Kendra directly presenting the relevant section of the employee handbook, rather than requiring you to sift through the entire document.

(Examples of Successful Implementation)

Several prominent organizations have successfully implemented Amazon Kendra, demonstrating its versatility and effectiveness. PwC, a leading professional services firm, uses Kendra for internal knowledge management, enabling employees to quickly access the information they need to serve clients effectively. Dow Jones leverages Kendra to enhance its news content search, allowing journalists and researchers to rapidly find relevant articles and information across its vast archives. Thomson Reuters, a global provider of legal information, employs Kendra for legal document discovery, significantly streamlining the research process for legal professionals. These examples showcase the power of Kendra to transform search across various industries and use cases.

(Actionable Tips for Readers)

Implementing Amazon Kendra effectively requires a strategic approach. Start with high-quality, well-structured content. The better organized your data, the easier it is for Kendra to understand and index it. Utilize feedback mechanisms to continually refine the search accuracy. Kendra learns from user interactions, so encouraging feedback helps it become even more accurate over time. Leverage pre-built connectors for common data sources like SharePoint, Salesforce, and ServiceNow to simplify integration. Finally, implement proper access controls and permissions to ensure data security and compliance.

(When and Why to Use This Approach)

Amazon Kendra is particularly well-suited for organizations operating within the AWS ecosystem. Its tight integration with other AWS services simplifies deployment and management. It's ideal for businesses dealing with large volumes of complex data, where traditional search methods struggle to deliver relevant results. If your organization needs to empower employees, customers, or partners with a powerful and intuitive search experience, Kendra offers a compelling solution.

(Pros and Cons)

While Kendra offers significant advantages, it’s crucial to understand the potential drawbacks. On the plus side, it requires minimal machine learning expertise to implement, seamlessly integrates with other AWS services, automatically learns and improves over time, and boasts enterprise-ready security and compliance features. However, it is limited to the AWS ecosystem, potentially incurring higher costs compared to traditional search solutions, and may require significant setup for complex environments.

(Link to Website)

You can explore Amazon Kendra further on the official AWS website: https://aws.amazon.com/kendra/

Amazon Kendra represents a significant leap forward in enterprise search. Its ability to understand natural language, extract precise answers, and learn from user interactions makes it a valuable tool for any organization seeking to unlock the full potential of its data. By following the tips provided and carefully considering the pros and cons, you can effectively leverage Amazon Kendra to transform your search experience and empower your users with the information they need to succeed.

7. Weaviate Open-Source Vector Database

Are you seeking a powerful, flexible, and transparent solution for building semantic search applications? Look no further than Weaviate, an open-source vector database that’s changing the game. Weaviate empowers you to unlock the true potential of your data by understanding the meaning behind words and concepts, delivering highly relevant search results that traditional keyword-based searches simply can't match. This is a prime example of the power of semantic search in action, and why it deserves a prominent place on this list.

Weaviate stands apart from traditional databases by focusing on vector embeddings. Instead of relying solely on keyword matching, Weaviate converts data objects into vector representations that capture their semantic meaning. This allows for similarity-based searches, returning results related in meaning even if they don’t share the exact same keywords. Think of it like searching for “best running shoes for marathon training” and getting results that include “top long-distance running footwear” – a level of understanding that traditional search struggles to achieve. This is why Weaviate represents a significant advancement in the realm of semantic search examples.

This innovative approach is further enhanced by Weaviate’s use of GraphQL APIs. GraphQL provides a flexible and efficient way to query and interact with the database, giving developers fine-grained control over the data they retrieve. This streamlined query interface makes it easier to build complex semantic search applications with features like filtering, sorting, and pagination. This combination of vector search and GraphQL makes Weaviate an incredibly versatile tool.

Weaviate offers several key features that solidify its position as a leading semantic search solution:

- Automatic Vectorization: Weaviate simplifies the process of creating vector embeddings with built-in support for various vectorization models, including transformers like Sentence-BERT and CLIP. This eliminates the need for complex manual vectorization processes, saving developers valuable time and resources.

- Hybrid Search: Combine the power of vector search with traditional keyword-based search for optimal results. This allows you to leverage existing data structures while benefiting from the enhanced relevance of semantic search. This hybrid approach ensures comprehensive results, capturing both precise matches and semantically related information.

- Multi-Modal Support: Weaviate isn’t limited to text. It can handle various data types, including images, audio, and video, enabling truly multi-modal semantic search applications. Imagine searching for "pictures of happy dogs playing in a park" and getting precisely that, regardless of the image tags or descriptions.

- Graph-like Data Relationships: Weaviate allows you to define relationships between data points, creating a knowledge graph that enriches the search experience. This enables more contextualized results and facilitates complex queries based on connections between concepts.

Weaviate is truly an open-source solution, offering flexibility and transparency for developers. It can be self-hosted, giving you complete control over your data and infrastructure, or deployed in the cloud via Weaviate’s commercial offerings. This makes it suitable for a wide range of use cases, from small research projects to large-scale enterprise applications.

Real-world examples demonstrate the practical power of Weaviate:

- Rocket.Chat: Uses Weaviate for enhanced message search within their platform, making it easier for users to find the information they need within a vast conversation history.

- Wevo: Leverages Weaviate for user feedback analysis, gaining deeper insights from customer comments and reviews by understanding their semantic meaning.

- Numerous Startups: Weaviate is being adopted by innovative startups for building advanced product recommendation engines, delivering personalized and highly relevant product suggestions to their customers.

Ready to harness the power of Weaviate for your own semantic search projects? Here are some actionable tips:

- Choose the right vectorization model: Select the model that best suits your data type and search requirements. Weaviate provides several options to choose from, each with its own strengths and weaknesses.

- Design your schema strategically: Leverage Weaviate's graph-like relationship features to create a well-structured knowledge graph that enhances search accuracy and context.

- Experiment with hybrid search: Combine vector and keyword search to find the optimal balance for your specific application.

- Factor in the GraphQL learning curve: While GraphQL offers powerful advantages, ensure your team has the necessary skills or allocate time for training.

While Weaviate offers significant advantages, it's important to be aware of potential drawbacks. Implementing Weaviate requires a certain level of technical expertise, and its ecosystem is smaller than some established solutions. You might also need additional tooling for certain enterprise features. However, the active developer community and comprehensive documentation provide valuable support and resources.

For more information and to get started with Weaviate, visit their website: https://weaviate.io/. Whether you're a digital marketer seeking deeper audience insights, a researcher exploring complex data sets, or an entrepreneur building the next generation of search applications, Weaviate provides a powerful and flexible platform for unlocking the true potential of semantic search.

Semantic Search Solutions Comparison

| Solution | Implementation Complexity 🔄 | Resource Requirements ⚡ | Expected Outcomes 📊 | Ideal Use Cases 💡 | Key Advantages ⭐ |

|---|---|---|---|---|---|

| Google's BERT-Powered Search | Moderate to high — advanced NLP models | High — significant computational resources | High accuracy for complex, conversational queries | Conversational search, ambiguous query understanding | Strong context understanding; multi-language support |

| Elasticsearch with Vector Search | High — complex setup and config | High — resource-intensive, scalable cluster | Real-time, scalable semantic and keyword hybrid search | Enterprise content and code search, location-based queries | Highly scalable; flexible hybrid search |

| OpenAI's Embedding-Based Search | Low to moderate — API integration | Moderate — API usage costs, external storage | State-of-the-art semantic understanding and similarity search | Product recommendations, document search, AI-powered search | Easy API integration; consistent model improvements |

| Pinecone Vector Database Search | Low — fully managed service | Moderate to high — costs scale with usage | Fast, accurate real-time vector similarity search | Real-time semantic search, large-scale vector queries | No infra management; fast query performance |

| Microsoft Cognitive Search | Moderate — AI skill pipeline configuration | Moderate to high — Azure ecosystem reliance | AI-powered content enrichment and semantic understanding | Enterprise document search, multi-modal data exploration | Strong security and compliance; Microsoft ecosystem integration |

| Amazon Kendra Intelligent Search | Low — minimal ML expertise required | Moderate to high — AWS service costs | Accurate natural language answers from varied sources | Enterprise knowledge bases, internal search systems | Easy AWS integration; auto-learning from feedback |

| Weaviate Open-Source Vector DB | High — requires technical expertise | Moderate — self-hosted or cloud options | Flexible hybrid semantic search with graph-like data models | Developer-driven semantic apps, hybrid vector + traditional search | Open-source; GraphQL interface; deployment flexibility |

The Future of Search is Semantic

From Google's advancements with BERT to the innovative applications of vector databases like Pinecone and Weaviate, these semantic search examples showcase a paradigm shift in how we interact with information. We've seen how understanding the nuances of language, context, and user intent allows for more accurate and relevant search results, far surpassing the limitations of traditional keyword-based searches. These insights are crucial for anyone working with digital content, from marketers crafting targeted campaigns to researchers delving into complex datasets. Mastering these concepts will empower you to unlock deeper insights, streamline workflows, and ultimately connect with your audience or information more effectively. The ability to leverage the true meaning behind queries is no longer a futuristic concept; it's the present, and it's rapidly shaping the future of how we discover, understand, and utilize information.

The power of semantic search is transforming how we interact with our digital world. Ready to experience the future of search firsthand? Explore Reseek, a powerful tool that leverages semantic search to help you effortlessly organize and access your digital assets. Visit Reseek today and discover a smarter way to search.